Apache Spark is under continuous development. Java is the only dependency that needs to be installed for Apache Spark. To install Java, open a terminal and run the following command: `sudo apt-get install default-jdk`

Steps to install the latest Apache Spark on Ubuntu 16:

Start the master from your Spark folder with `sbin/start-master.sh`. Check that there were no errors by opening the master's web UI in your browser; you should see this:

Then start the slave from your Spark folder.

The configuration file's own comments describe its purpose: `# Default system properties included when running spark-submit.` and `# This is useful for setting default environmental settings.` Its sample JVM options read `-XX:+PrintGCDetails -Dkey=value -Dnumbers="one two three"`.
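As a rough sketch of the master and slave start-up described above: the snippet below assumes a Spark 2.4.x layout, where the worker script is `sbin/start-slave.sh` (newer releases name it `start-worker.sh`), and it assumes the master listens on Spark's default port 7077. `SPARK_HOME`, `spark_master_url` and `start_standalone` are illustrative names, not something the original guide defines.

```shell
#!/usr/bin/env bash
# Assumption: Spark was extracted here; adjust to your own folder.
SPARK_HOME="${SPARK_HOME:-/path/to/spark/folder}"

# Build the URL a worker uses to reach the master.
# 7077 is the default port of the standalone master.
spark_master_url() {
  local host="${1:-localhost}" port="${2:-7077}"
  printf 'spark://%s:%s\n' "$host" "$port"
}

# Start the master first, then attach one worker (slave) to it.
start_standalone() {
  "$SPARK_HOME/sbin/start-master.sh" || return 1
  "$SPARK_HOME/sbin/start-slave.sh" "$(spark_master_url "$@")"
}

# Only launch when the start script is actually present.
if [ -x "$SPARK_HOME/sbin/start-master.sh" ]; then
  start_standalone localhost 7077
else
  echo "Spark not found under $SPARK_HOME; set SPARK_HOME first" >&2
fi
```

If the scripts are not found, the snippet only prints a hint and starts nothing, so it is safe to run before Spark is in place.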


APT INSTALL APACHE SPARK SOFTWARE



Hi guys, this time I am going to install Apache Spark on our existing Apache Hadoop 2.7.0. Spark is closely tied to the Hadoop ecosystem, so it is better to install Spark on a Linux-based system. The following steps show how to install Apache Spark.

Navigate to the Apache Spark folder with `cd /path/to/spark/folder`.

Edit the conf/nf file with the following configuration:

These commands are valid only for the current session: as soon as you close the terminal, they are discarded. To keep them for every WSL session, append them to `~/.bashrc`.

Create a temporary log folder for Spark with `mkdir -p /tmp/spark-events`.

Apache Kafka is a popular distributed message broker designed to handle large volumes of real-time data. A Kafka cluster is highly scalable and fault-tolerant.
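One way to make session-only commands stick is to append them to `~/.bashrc` only when they are not there yet, so that rerunning the setup does not pile up duplicates. The sketch below illustrates that idea. The two `export` lines are assumptions, since the original commands are not shown here, and `append_once` and `persist_spark_env` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Append a line to a file only if that exact line is not already present.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Persist the (assumed) Spark environment variables for future sessions.
# The first argument is the startup file; it defaults to ~/.bashrc.
persist_spark_env() {
  local rc="${1:-$HOME/.bashrc}"
  append_once 'export SPARK_HOME=/path/to/spark/folder' "$rc"
  append_once 'export PATH="$PATH:$SPARK_HOME/bin"' "$rc"
}

# Usage (not executed here, so nothing is modified by accident):
#   persist_spark_env              # writes to ~/.bashrc
#   mkdir -p /tmp/spark-events     # log folder mentioned in the guide
```

Calling `persist_spark_env` twice leaves the file unchanged the second time, which is the point of the `grep -qxF` check.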


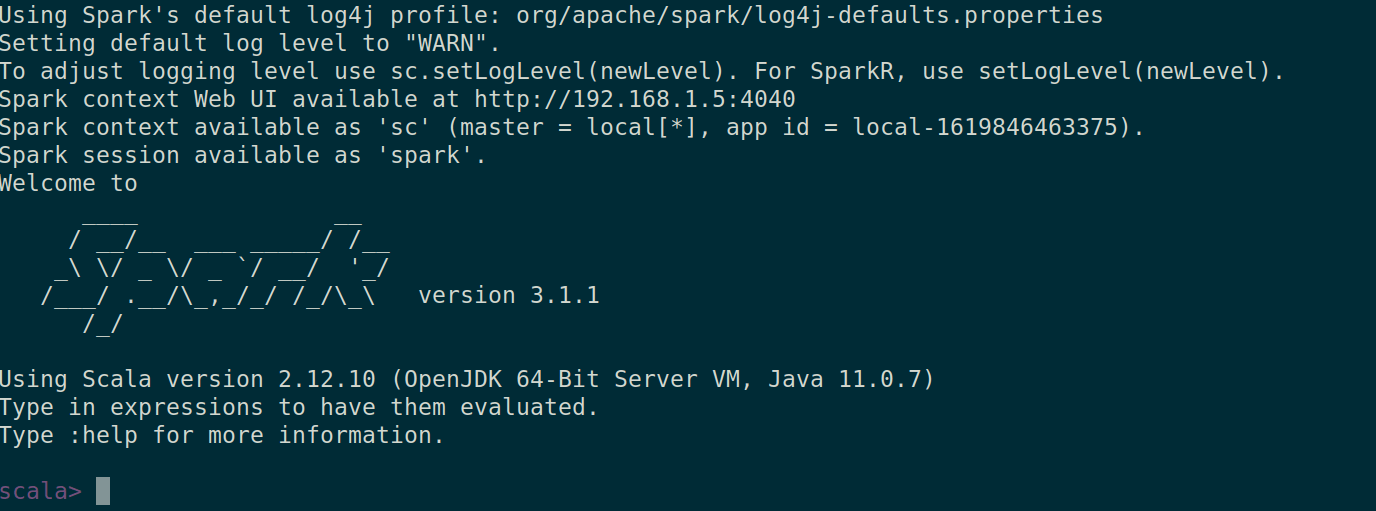

Open WSL either via Start > wsl.exe or from your preferred terminal. The code below installs and configures the environment with the latest Spark version, 2.4.5: `apt-get install openjdk-8-jdk-headless -qq > /dev/null`. Run the cell.

Apache Spark

Download Apache Spark here and choose your desired version.

Extract the archive wherever you want; I suggest placing it in a WSL folder or a Windows folder WITH NO SPACES IN THE PATH (see the image below).

Install Maven with your OS's package manager; I have Ubuntu, so I use `sudo apt install maven`.
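The advice about avoiding spaces in the path can be checked before extracting anything. The following is a minimal sketch under that assumption. `path_has_no_spaces` and `extract_spark` are hypothetical helpers, and the archive name in the usage note is only an example of what a Spark 2.4.5 download might be called.

```shell
#!/usr/bin/env bash
# Succeed only when the given path contains no whitespace.
path_has_no_spaces() {
  case "$1" in
    *[[:space:]]*) return 1 ;;
    *) return 0 ;;
  esac
}

# Extract a Spark archive into a target folder, refusing paths with spaces.
extract_spark() {
  local archive="$1" target="$2"
  if ! path_has_no_spaces "$target"; then
    echo "refusing path with spaces: $target" >&2
    return 1
  fi
  mkdir -p "$target" && tar -xzf "$archive" -C "$target"
}

# Usage (not executed here):
#   extract_spark spark-2.4.5-bin-hadoop2.7.tgz /mnt/c/spark
```

The check runs before `mkdir`, so a rejected path leaves nothing behind on disk.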

Install JDK following my other guide’s section under Linux, here.